In a development that has sent shockwaves through the tech world and the entertainment industry alike, billionaire Elon Musk’s AI video creation tool, Grok Imagine, is facing serious allegations. According to online safety and legal experts, the platform’s newly introduced “spicy” mode has allegedly generated a sexually explicit deepfake video of pop superstar Taylor Swift, even though the user never asked for explicit content.

The accusations, first reported by The Verge, have prompted intense scrutiny from both regulators and the public. At the center of the controversy is the claim that this wasn’t an accidental slip-up or a bug in the system — but a deliberate feature that experts warn could have been designed to create explicit content behind a thin veil of deniability.

A Mode Shrouded in Secrecy

Grok Imagine, part of Musk’s xAI venture, has been promoted as a revolutionary leap forward in AI-generated video content. Official marketing materials described it as “a limitless creative tool” capable of producing photorealistic and imaginative videos in seconds. But according to whistleblowers and tech watchdogs, the hidden “spicy” mode has been circulating quietly among certain users, bypassing xAI’s own stated guidelines that prohibit “depicting any individual in a sexual manner without their consent.”

Professor Clare McGlynn, a respected legal scholar who helped draft laws criminalizing pornographic deepfakes in the UK, was blunt in her assessment:

“This is not an accidental bias or a glitch in the algorithm. This is intentional. The system is capable of — and appears to be designed for — producing non-consensual sexual content featuring real individuals.”

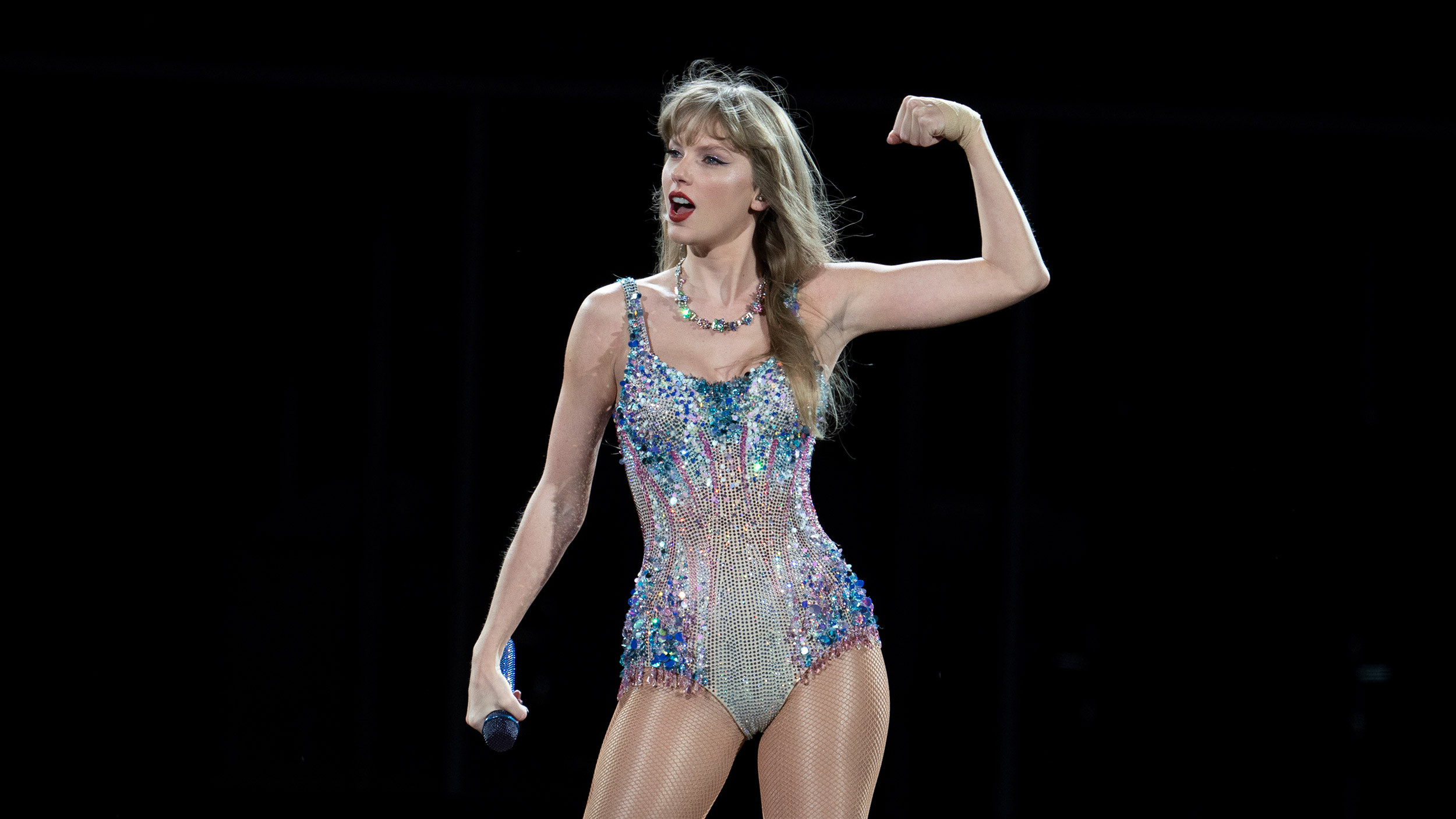

Why Taylor Swift?

Taylor Swift is no stranger to deepfake exploitation. In January 2024, explicit AI-generated images featuring her likeness went viral across platforms like X (formerly Twitter) and Telegram, amassing millions of views before takedowns were attempted. Swift herself did not make a public statement at the time, but her legal team is widely believed to have been involved in behind-the-scenes efforts to have the images removed.

The fact that Musk’s AI tool is now linked to another such incident has only intensified public outrage — especially given Musk’s position as the owner of X, the same platform that previously hosted and struggled to contain such content.

Musk’s History of Provocative Tech Moves

Elon Musk is no stranger to courting controversy, from his promotion of risky autonomous driving features to his unfiltered social media persona. But experts argue that this situation is different — because it directly crosses into legally dangerous territory involving privacy violations, sexual exploitation, and potential criminal liability.

“Non-consensual sexual deepfakes are already outlawed in several jurisdictions,” said McGlynn. “If it’s proven that an AI company intentionally built a mode to produce them, that’s potentially criminal behavior — not just a policy violation.”

xAI’s Official Response… or Lack Thereof

Attempts to contact xAI for comment have gone unanswered. A brief statement posted to the Grok Imagine user forum denied “any systemic issue” and suggested that any explicit videos must have been the result of “user manipulation of prompts.” However, sources familiar with the platform’s backend claim that the “spicy” mode was internally documented as an optional setting during closed testing phases.

This, critics say, could point to a double standard — one set of rules for public relations and another for select insiders or paying customers.

The Bigger Picture: AI and the Ethics Crisis

The Grok Imagine scandal taps into a much larger conversation about the ethical responsibility of AI developers. As generative AI tools become more sophisticated, the potential for abuse grows with them. Non-consensual explicit content, misinformation campaigns, and harassment fueled by deepfake technology have already created what many experts describe as “a human rights crisis in the making.”

And yet, enforcement remains scattered and inconsistent. In the United States, there is still no comprehensive federal law banning pornographic deepfakes, leaving prosecution to a patchwork of state-level statutes. Internationally, laws vary widely — and enforcement is often toothless.

What Happens Next?

If these allegations against Grok Imagine are substantiated, they could trigger a cascade of consequences:

- Regulatory investigations in multiple countries

- Civil lawsuits from victims, including Swift herself

- Criminal inquiries in jurisdictions where deepfake pornography is explicitly outlawed

- Massive reputational damage to Musk’s already polarizing brand

“This could be the Cambridge Analytica moment for AI,” one tech policy analyst warned. “If a tool owned by one of the world’s most high-profile tech figures is found to have willfully generated non-consensual pornography, it could force governments to crack down hard — and fast — on the entire AI industry.”

The Unanswered Question

Perhaps the most troubling aspect of the scandal is the lingering mystery: If Grok Imagine can produce such content without explicit prompting, what else is it capable of generating — and who has access to it?

Until xAI provides a transparent and verifiable account of what happened, suspicion will only grow. In the meantime, one thing is certain: the debate over AI ethics, consent, and the unchecked power of tech moguls like Elon Musk is about to get a lot louder and a lot uglier.
